Introduction to Applied Epistemic Engineering

What if you could debug your beliefs like software?

What is Applied Epistemic Engineering?

Applied Epistemic Engineering (AEE) is a new discipline that treats belief systems like code: codable, testable, and deployable. Its purpose is to make hidden assumptions visible, stress-test them under adversarial conditions, and redesign them for resilience.

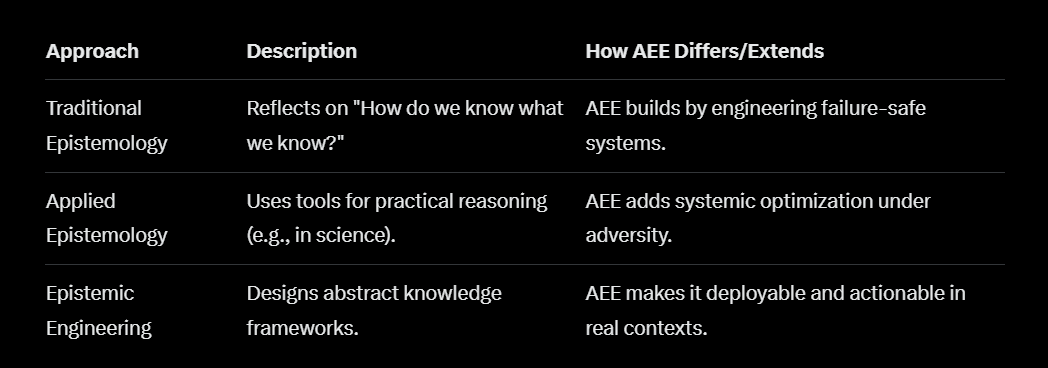

To see where AEE fits, it helps to compare it with related approaches:

Where traditional epistemology reflects by asking, “How do we know what we know?” AEE builds by asking, “How do we engineer belief systems so they fail safely and recover quickly?”

Applied Epistemology: Uses epistemic tools to clarify reasoning in practice (e.g., in science, law, or ethics). Valuable, but still primarily analytic rather than systemic. AEE takes that foundation and pushes it further, optimizing reasoning under extreme conditions.

Epistemic Engineering: Designs frameworks for improving knowledge systems, often abstract or theory-driven (e.g., formal logic, Bayesian methods). AEE differs by being deployable and actionable: it is not just about designing frameworks, but about stress-testing and rebuilding beliefs in real-world contexts.

It operates as:

A debugging tool for conceptual ambiguity.

A systems discipline for optimizing decision-making under uncertainty.

A resilience framework that prevents system-wide breakdown of decision reliability in adversarial environments.

AEE is like playing poker with your cards face up, but the cards are your beliefs, and the game is truth. You don’t bluff. You justify. You invite critique. And you rebuild stronger every time.

At the core, AEE is a modular epistemic protocol loop:

Frame: Define the claim, context, and scope.

Disassemble: Break the claim into falsifiable components.

Stress-test: Run adversarial simulations, contradiction hunts, and moral audits (systematic checks for whether a belief or policy holds up under different ethical frameworks, not just logical ones).

Reconstruct: Rebuild the claim with stronger logic, modular upgrades, or discard it entirely.
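For readers who think in code, the four steps above can be sketched as a small Python program. This is only an illustration under stated assumptions, not an official AEE implementation: the names `Belief`, `Component`, `stress_test`, and `reconstruct` are hypothetical, and the "observation" is made up for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Component:
    """One falsifiable piece of a claim, paired with a check that can fail."""
    statement: str
    check: Callable[[], bool]  # returns True if the component survives the test


@dataclass
class Belief:
    claim: str  # Frame: the claim as stated
    scope: str  # Frame: the context and scope it applies to
    components: List[Component] = field(default_factory=list)  # Disassemble


def stress_test(belief: Belief) -> List[Component]:
    """Stress-test: run every check and return the components that failed."""
    return [c for c in belief.components if not c.check()]


def reconstruct(belief: Belief, failed: List[Component]) -> str:
    """Reconstruct: keep the claim if nothing failed, otherwise flag it for revision."""
    if not failed:
        return belief.claim
    dropped = "; ".join(c.statement for c in failed)
    return f"REVISE: '{belief.claim}' (failed: {dropped})"


# Hypothetical observation: how many monsters a flashlight check turned up.
monsters_seen = 0

belief = Belief(
    claim="Monsters live under my bed at night.",
    scope="my bedroom, at night",
    components=[
        Component("Something is visible under the bed", lambda: monsters_seen > 0),
    ],
)

failed = stress_test(belief)
print(reconstruct(belief, failed))
```

The point of the design is that each component carries its own check, so a claim can only pass by surviving tests that were able to fail.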

📖 A Simple Example

Imagine a kid says: “I believe monsters live under my bed.”

Let’s run that belief through the AEE loop:

1. Frame

We ask: What exactly is the claim? → “Monsters live under my bed at night.”

2. Disassemble

We break it down: → What is a monster? → When do they come? → What do they do?

3. Stress-test

We test the claim: → Shine a flashlight under the bed. → Check every night for a week. → Ask what would prove there are no monsters.

4. Reconstruct

We rebuild the belief: → “There are no monsters under my bed—but sometimes I imagine scary things when it’s dark.”

Now the belief is stronger, clearer, and less scary.

📖 A Complex Business Example

Scenario: A mid‑sized tech company believes: “Expanding into international markets will guarantee higher profits.”

Let’s run this belief through the AEE protocol:

1. Frame

Define the claim clearly. → “If we expand into international markets, our company will achieve higher profits within two years.”

2. Disassemble

Break the claim into testable components:

What counts as “international markets”? (Europe? Asia? Latin America?)

What does “higher profits” mean? (Revenue growth? Net margin? ROI?)

What assumptions are baked in? (Stable exchange rates, low regulatory barriers, scalable operations.)

3. Stress‑test

Run adversarial simulations and contradiction hunts:

What if tariffs rise or trade restrictions tighten?

What if local competitors undercut pricing?

What if expansion costs (legal, cultural adaptation, logistics) outweigh revenue gains?

What if the domestic market still has untapped growth potential?

This stage might involve scenario modeling, red‑team analysis, or financial simulations.

4. Reconstruct

Rebuild the belief with stronger logic and modular upgrades: → “International expansion may increase profits, but only if we first validate demand in target regions, model regulatory and currency risks, and pilot with a limited rollout. Profits are not guaranteed; they are contingent on specific conditions we can test in advance.”

Outcome: Instead of a brittle, overconfident belief (“expansion guarantees profit”), the company now has a resilient, testable strategy: expansion is treated as a hypothesis to be validated, not a foregone conclusion. This prevents costly overextension and aligns decision‑making with reality under strain.
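The stress-test step in this example can be made concrete with a toy scenario model. The sketch below is a minimal illustration, assuming a deliberately simple rule (profit change equals revenue gain minus expansion cost). Every number is invented for the example, and the `Scenario` class is a hypothetical helper, not part of any real tool.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    revenue_gain: float    # added annual revenue from expansion (hypothetical units)
    expansion_cost: float  # legal, adaptation, and logistics costs (hypothetical units)

    def profit_change(self) -> float:
        return self.revenue_gain - self.expansion_cost


# Hypothetical scenarios drawn from the stress-test questions.
scenarios = [
    Scenario("baseline", revenue_gain=10.0, expansion_cost=6.0),
    Scenario("tariffs rise", revenue_gain=7.0, expansion_cost=8.0),
    Scenario("local competitors undercut pricing", revenue_gain=5.0, expansion_cost=6.0),
]

# A "guarantee" only holds if profit rises in every scenario considered.
failures = [s.name for s in scenarios if s.profit_change() <= 0]
claim_survives = not failures

print("claim survives:", claim_survives)
print("fails under:", failures)
```

With these made-up inputs the "guarantee" fails in two of three scenarios, which is the signal to reconstruct the belief as a conditional hypothesis.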

Why It Matters

AEE matters because it prevents brittle belief systems from collapsing when challenged, making individuals and institutions more adaptive. Unlike pure theory, AEE is built for execution. It uses logic, adversarial modeling, and empirical data to:

Expose structural falsehoods.

Forecast robust outcomes.

Reframe incentives toward ‘everybody wins’ equilibria—outcomes where no party’s gain requires another’s loss, and where incentive structures remain stable because cooperation is more rewarding than exploitation.

Without AEE, belief systems tend to calcify. They resist contradiction, reward conformity, and spiral into confirmation bias feedback loops—where evidence is filtered, dissent is punished, and epistemic collapse becomes inevitable. This is how institutions lose public trust, how ideologies become brittle, and how individuals become trapped in echo chambers.

In short, AEE is about engineering truth under pressure. It transforms epistemology from passive reflection into active design, addressing gaps in fields like decision theory, systems engineering, and public discourse.

Beyond its disciplinary roots, AEE also builds on specific frameworks that readers may recognize from science, philosophy, and strategy.

How AEE Relates to Existing Frameworks

AEE builds on, but also extends beyond, several established approaches. For readers who want to explore these traditions in more depth, see Bayesian epistemology [^1], Popper’s falsificationism [^2], red teaming in cybersecurity [^3], and recent work on epistemic engineering in systemic cognition [^4].

Bayesian Epistemology: Bayesian reasoning updates beliefs based on probabilities and new evidence. AEE incorporates this spirit of revision but expands it into a systems discipline—not just updating numbers but modularly disassembling and reconstructing entire belief architectures.

Falsification (Karl Popper): Popper argued that scientific claims must be falsifiable. AEE adopts falsifiability as a core principle but operationalizes it into a repeatable loop: frame, disassemble, stress-test, reconstruct. Where Popper focused on science, AEE applies the same rigor to everyday reasoning, institutions, and public discourse.

Red Teaming (Cybersecurity & Military Strategy): Red teaming stress-tests systems by simulating adversarial attacks. AEE generalizes this method to the epistemic domain: every belief is subjected to adversarial modeling, contradiction hunts, and scenario simulations. The goal is not just defense, but antifragile growth.

AEE is not a rejection of these traditions; it is their integration into a unified, teachable protocol. It takes the precision of Bayesian updating, the rigor of Popperian falsifiability, and the resilience of red teaming, and fuses them into a mindset and method for engineering truth in adversarial environments.
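To make the Bayesian ingredient concrete, here is a minimal sketch of a single belief update using Bayes' rule. The function name `bayes_update` and all probabilities are assumptions chosen for illustration; they do not come from any AEE specification.

```python
def bayes_update(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Return P(claim | evidence) using Bayes' rule."""
    numerator = p_evidence_if_true * prior
    denominator = numerator + p_evidence_if_false * (1.0 - prior)
    if denominator == 0:
        raise ValueError("evidence has zero probability under both hypotheses")
    return numerator / denominator


# Hypothetical numbers: we start undecided (prior 0.5), then observe evidence
# that is unlikely if the claim is true (0.1) and likely if it is false (0.9).
prior = 0.5
posterior = bayes_update(prior, p_evidence_if_true=0.1, p_evidence_if_false=0.9)
print(round(posterior, 3))
```

In AEE terms, an update like this adjusts confidence in one component of a belief. The disassemble and reconstruct steps decide which components exist in the first place.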

AEE as a Mindset

AEE isn’t just a discipline; it’s a cognitive posture. It rewires how individuals engage with reality, replacing comfort-first reflexes with truth-first protocols. AEE trains the mind to treat beliefs as tools, not identities, and contradiction as a feature, not a threat.

Where most people default to preserving coherence, AEE defaults to falsifiability. Where others defend beliefs, AEE disassembles and rebuilds them. What matters is not just what you believe, but how you process belief itself.

This mindset includes:

Falsifiability-first reasoning: Every belief must be vulnerable to revision.

Modular cognition: Beliefs are composable and replaceable, not sacred.

Adversarial curiosity: Strong counterarguments are welcomed, not avoided.

Scenario-driven thinking: Claims are tested against edge cases, incentives, and moral coherence.

To adopt AEE is to play epistemic poker with your cards face up. Not to win arguments, but to build truth. It’s a mindset that transforms belief from a static possession into a dynamic system that’s engineered for clarity, resilience, and public accountability.

This isn’t to say that other mindsets, like intuition, are less important. Rather, AEE acts as a bridge: it provides a way to legitimize those intuitions and share them with others without falling back on “trust me, bro.”

📖 Intuition + AEE in Action

Imagine a startup founder who has a strong gut feeling: “This new product feature will be a hit with customers.”

On its own, that intuition is valuable but hard to communicate, and it risks sounding like “trust me, bro.” With AEE, the intuition becomes a testable, sharable claim:

Frame → “If we launch this feature, at least 30% of our current users will adopt it within three months.”

Disassemble → What does “adopt” mean: daily use, a paid upgrade, or positive feedback? → Which users are we targeting: new sign‑ups, power users, or casual users?

Stress‑test → What if adoption is only 10%? → What if it cannibalizes existing features? → What if competitors launch something similar first?

Reconstruct → “The feature could succeed if we pilot it with a subset of power users, measure adoption against a 30% benchmark, and compare churn rates before scaling.”

Outcome: The founder’s intuition isn’t dismissed; it’s translated into a falsifiable, modular plan that the whole team can evaluate. AEE doesn’t replace intuition; it bridges it into collective reasoning so others can engage, critique, and improve it.
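Once the claim is framed with a number, checking it is mechanical. The sketch below assumes "adopt" has already been defined during the disassemble step. The pilot figures and the helper `adoption_rate` are hypothetical.

```python
def adoption_rate(adopters: int, pilot_users: int) -> float:
    """Share of pilot users who adopted the feature."""
    if pilot_users <= 0:
        raise ValueError("pilot_users must be positive")
    return adopters / pilot_users


BENCHMARK = 0.30  # from the framed claim: at least 30% adoption

# Hypothetical pilot results with a subset of power users.
pilot_users = 200
adopters = 46

rate = adoption_rate(adopters, pilot_users)
meets_benchmark = rate >= BENCHMARK
print(f"adoption {rate:.0%}, meets benchmark: {meets_benchmark}")
```

A result below the benchmark does not end the conversation. It sends the team back to the reconstruct step with evidence everyone can inspect.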

Try It Out

Take one of your own beliefs and run it through the protocol loop. See how it holds up, then rebuild it stronger. Now imagine a world where we all treated beliefs this way, inviting critique to make them resilient instead of defending brittle assumptions and spiraling into confirmation bias.

That’s the world AEE is designed to build, not just for us but for the generations who will live with the systems we leave behind.

References

[^1]: Stanford Encyclopedia of Philosophy. (2004/updated). Bayesian Epistemology. https://plato.stanford.edu/entries/epistemology-bayesian/

[^2]: Popper, K. (1959). The Logic of Scientific Discovery. London: Routledge. See also: Stanford Encyclopedia of Philosophy — Karl Popper. https://plato.stanford.edu/entries/popper/

[^3]: National Institute of Standards and Technology. (2008). NIST SP 800-115: Technical Guide to Information Security Testing and Assessment. https://csrc.nist.gov/publications/detail/sp/800-115/final See also: U.S. Army University of Foreign Military and Cultural Studies (UFMCS). (2018). The Red Team Handbook (Version 9). https://usacac.army.mil/organizations/ufmcs See also: MITRE ATT&CK. Red Team Resources Overview. https://attack.mitre.org/resources/

[^4]: Cowley, S. J., et al. (2022). How systemic cognition enables epistemic engineering. Frontiers in Artificial Intelligence, 5, 960384. https://www.frontiersin.org/articles/10.3389/frai.2022.960384/full